Author: Simon Erses, 6 years ago

1997

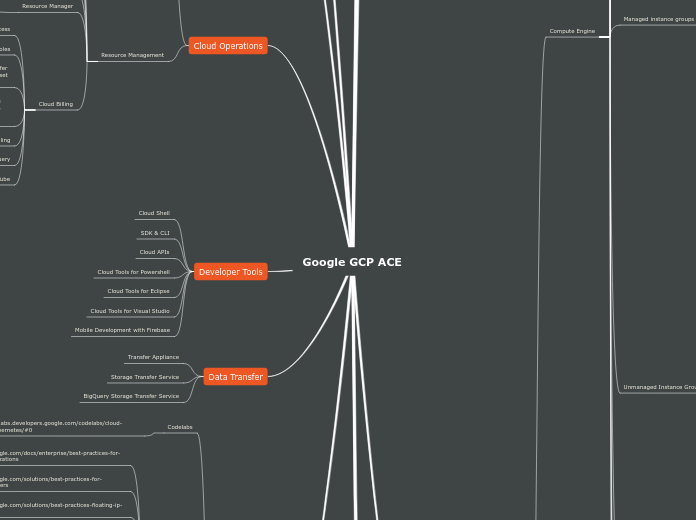

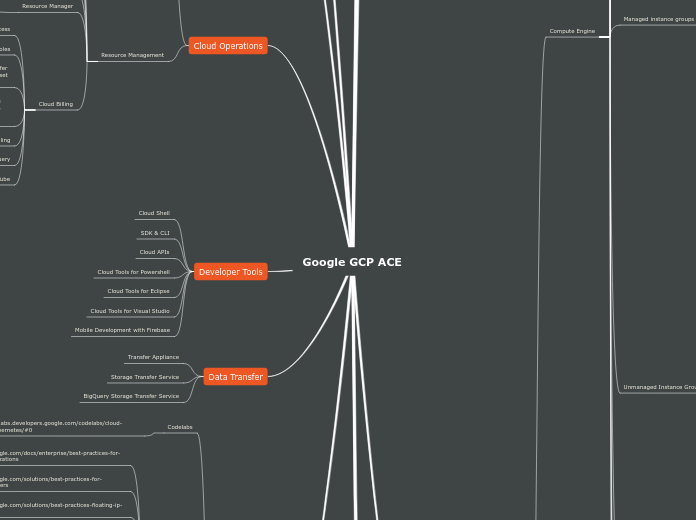

Google GCP ACE-2019


DevOps (ReactiveOps)

https://medium.com/search?q=reactiveops

Kubernetes (various articles by Jonathan Campos)

https://medium.com/google-cloud/search?q=Jonathan%20Campos

Decision trees (medium.com)

Storage class / ML or SQL / Load balancer / Identity products

https://medium.com/google-cloud/some-more-gcp-flowcharts-dc0bb6f7c94e

Transfer datasets / Tags & labels / Floating IP / Scoping GKE

https://medium.com/@grapesfrog/more-gcp-flowcharts-for-your-delectation-36b63ebb72ce

Compute/Storage/Network/EncryptionKeys/Authentication

https://medium.com/google-cloud/a-gcp-flowchart-a-day-2d57cc109401

https://www.draw.io/

https://online.visual-paradigm.com

https://www.youtube.com/watch?v=zYOgpo0SDBc

https://www.youtube.com/watch?v=iDiGBIOq7ec

Cloud-On-Air

Google 'One minute Videos'

Cloud-on-air (Google webinars)

Assignments

file:///C:/Users/simon/Desktop/Kaseys%20Theory/assignment-q1-10-gcp-eng-a-pe-102.pdf

Outline

https://docs.google.com/document/d/12SoOBMpEQVclCgdTJ10hVgnpTlI5A7GBNUv396u87KQ/edit

Practical Exam Prep

https://www.udemy.com/share/100roeBEcdd1pUQnQ=/

Hands-on exercises

https://www.udemy.com/share/100sIKBEcdd1pUQnQ=/

https://acloud.guru/

https://www.coursera.org/

YouTube

https://youtu.be/KwgKPs5e7jQ

Billing and BigQuery

https://cloud.google.com/billing/docs/how-to/bq-examples

https://cloud.google.com/billing/docs/how-to/export-data-bigquery

Custom roles for billing

https://cloud.google.com/billing/docs/how-to/custom-roles

*Note: Although you link billing accounts to projects, billing accounts are not parents of projects in a Cloud IAM sense, and therefore projects don't inherit permissions from the billing account they are linked to.

*Note: Other legacy roles (such as Project Owner) also confer some billing permissions. The Project Owner role is a superset of Project Billing Manager.

Roles

Project Billing Manager

Link/unlink the project to/from a billing account

This role allows the user to attach the project to a billing account but does not grant any rights over resources. Project owners can use this role to allow someone else to manage the billing for the project without granting them resource access.

Level: organization or project

Billing account Viewer

View billing account cost information and transactions

Level: organisation or billing account

Billing Account Viewer access is typically granted to finance teams. It gives read-only access to spend information but does not allow linking or unlinking projects or otherwise managing the billing account, and it grants no rights over resources.

Billing account user

Link projects to billing accounts

Level: organisation or billing account

This role has very restricted permissions, so you can grant it broadly, typically in combination with Project Creator. These two roles allow a user to create new projects linked to the billing account on which the role is granted.

Billing account administrator

Manage billing accounts (not create)

Level: Organization or Billing account

This role is an owner role for a billing account. Use it to manage payment instruments, configure billing exports, view cost information, link and unlink projects and manage other user roles on the billing account.

Billing account creator

Create new self-service billing accounts

Level: Organisation

Tip: Minimize the number of users who have this role to help prevent proliferation of untracked cloud spend in your organization.

Users must have this role to sign up for GCP with a credit card using their corporate identity.

Use this role for initial billing setup or temporarily to create a billing account in a different currency.

https://cloud.google.com/billing/docs/how-to/billing-access

Creating & Managing Labels

https://cloud.google.com/resource-manager/docs/creating-managing-labels

NOTE: For a given reporting service and project, only 1,000 distinct key-value pair combinations are preserved within a one-hour window. For example, the Compute Engine service reports metrics on virtual machine (VM) instances. If you deploy a project with 2,000 VMs, each with a distinct label, metrics are preserved only for the first 1,000 labels that exist within the one-hour window.

Services supporting Labels

https://cloud.google.com/resource-manager/docs/creating-managing-labels#label_support

Requirements for Labels

Keys must start with a lowercase letter or international character.

The key portion of a label must be unique within a single resource. However, you can use the same key with multiple resources.

Keys and values can contain only lowercase letters, numeric characters, underscores, and dashes. All characters must use UTF-8 encoding, and international characters are allowed.

Keys have a minimum length of 1 character and a maximum length of 63 characters, and cannot be empty. Values can be empty, and have a maximum length of 63 characters.

Each label must be a key-value pair.

Each resource can have multiple labels, up to a maximum of 64.
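The label requirements above can be expressed as a small validator. This is only a sketch. "International character" is approximated as any Unicode letter that is not uppercase, lengths are counted in characters, and the function names are hypothetical.

```python
# Sketch of the GCP label rules listed above (an approximation, not an official check).
MAX_LEN = 63      # max characters for keys and values
MAX_LABELS = 64   # max labels per resource


def _allowed_char(ch: str) -> bool:
    """Lowercase or international letters, ASCII digits, '_' and '-'."""
    if ch in "_-":
        return True
    if ch.isascii() and ch.isdigit():
        return True
    # Assumption: treat any non-uppercase Unicode letter as allowed.
    return ch.isalpha() and not ch.isupper()


def valid_key(key: str) -> bool:
    if not 1 <= len(key) <= MAX_LEN:
        return False
    first = key[0]
    # Keys must start with a lowercase letter or international character.
    if not (first.isalpha() and not first.isupper()):
        return False
    return all(_allowed_char(c) for c in key)


def valid_value(value: str) -> bool:
    # Values may be empty but share the key's character rules and length cap.
    return len(value) <= MAX_LEN and all(_allowed_char(c) for c in value)


def valid_labels(labels: dict) -> bool:
    # A dict already guarantees that keys are unique on one resource.
    return len(labels) <= MAX_LABELS and all(
        valid_key(k) and valid_value(v) for k, v in labels.items()
    )
```

For example, `valid_labels({"environment": "production", "team": "research"})` passes. `valid_key("Environment")` fails because keys must not contain uppercase letters.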

Common uses

Virtual machine labels: A label can be attached to a virtual machine. Virtual machine tags that you defined in the past will appear as a label without a value.

State labels: For example, state:active, state:readytodelete, and state:archive.

Environment or stage labels: For example, environment:production and environment:test.

Component labels: For example, component:redis, component:frontend, component:ingest, and component:dashboard.

Team or cost center labels: Add labels based on team or cost center to distinguish instances owned by different teams (for example, team:research and team:analytics). You can use this type of label for cost accounting or budgeting.

A label is a key-value pair that helps you organize your Google Cloud Platform instances. You can attach a label to each resource, then filter the resources based on their labels. Information about labels is forwarded to the billing system, so you can break down your billing charges by label.

Targets for firewall rules

Every firewall rule in GCP must have a target which defines the instances to which it applies. The default target is all instances in the network, but you can specify instances as targets using either target tags or target service accounts.

Egress rules apply to traffic leaving your VPC network. For egress rules, the targets are source VMs in GCP.

Ingress rules apply to traffic entering your VPC network. For ingress rules, the targets are destination VMs in GCP.

https://cloud.google.com/vpc/docs/add-remove-network-tags

You can only add network tags to VM instances or instance templates. You cannot tag other GCP resources. You can assign network tags to new instances at creation time, or you can edit the set of assigned tags at any time later. Network tags can be edited without stopping an instance.

Network tags are text attributes you can add to Compute Engine virtual machine (VM) instances. Tags allow you to make firewall rules and routes applicable to specific VM instances.

https://cloud.google.com/vpc/images/firewalls/firewall_overview_egress_examples.svg

An egress rule with priority 1000 is applicable to VM 3. This rule blocks its outgoing TCP traffic to any destination in the 192.168.1.0/24 IP range. Even though ingress rules for VM 4 permit all incoming traffic, VM 3 cannot send TCP traffic to VM 4. However, VM 3 is free to send UDP traffic to VM 4 because the egress rule only applies to the TCP protocol. Additionally, VM 3 can send any traffic to other instances in the VPC network outside of the 192.168.1.0/24 IP range, as long as those other instances have ingress rules to permit such traffic. Because it does not have an external IP address, it has no path to send traffic outside of the VPC network.

An egress rule with priority 1000 is applicable to VM 2. This rule denies all outgoing traffic to all destinations (0.0.0.0/0). Outgoing traffic to other instances in the VPC is blocked, regardless of the ingress rules applied to the other instances. Even though VM 2 has an external IP address, this firewall rule blocks its outgoing traffic to external hosts on the Internet.

VM 1 has no specified egress firewall rule, so the implied allow egress rule lets it send traffic to any destination. Connections to other instances in the VPC network are allowed, subject to applicable ingress rules for those other instances. VM 1 is able to send traffic to VM 4 because VM 4 has an ingress rule allowing incoming traffic from any IP address range. Because VM 1 has an external IP address, it is able to send traffic to external hosts on the Internet. Incoming responses to traffic sent by VM 1 are allowed because firewall rules are stateful.

https://cloud.google.com/vpc/images/firewalls/firewall_overview_ingress_examples.svg

An ingress rule with priority 1000 is applicable to VM 3. This rule allows TCP traffic from instances in the network with the network tag client, such as VM 4. TCP traffic from VM 4 to VM 3 is allowed because VM 4 has no egress rule blocking such communication (only the implied allow egress rule is applicable). Because VM 3 does not have an external IP, there is no path to it from external hosts on the Internet.

VM 2 has no specified ingress firewall rule, so the implied deny ingress rule blocks all incoming traffic. Connections from other instances in the network are blocked, regardless of egress rules for the other instances. Because VM 2 has an external IP, there is a path to it from external hosts on the Internet, but the implied deny rule blocks external incoming traffic as well.

An ingress rule with priority 1000 is applicable to VM 1. This rule allows incoming TCP traffic from any source (0.0.0.0/0). TCP traffic from other instances in the VPC network is allowed, subject to applicable egress rules for those other instances. VM 4 is able to communicate with VM 1 over TCP because VM 4 has no egress rule blocking such communication (only the implied allow egress rule is applicable). Because VM 1 has an external IP, this rule also permits incoming TCP traffic from external hosts on the Internet.

Implied rules cannot be removed

Implied deny ingress

An ingress rule whose action is deny, source is 0.0.0.0/0, and priority is the lowest possible (65535) protects all instances by blocking incoming traffic to them. Incoming access may be allowed by a higher priority rule. Note that the default network includes some additional rules that override this one, allowing certain types of incoming traffic.

Implied allow egress

An egress rule whose action is allow, destination is 0.0.0.0/0, and priority is the lowest possible (65535) lets any instance send traffic to any destination, except for traffic blocked by GCP. Outbound access may be restricted by a higher priority firewall rule. Internet access is allowed if no other firewall rules deny outbound traffic and if the instance has an external IP address or uses a NAT instance.
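The examples above follow one evaluation model. Take the rules for the traffic direction that match the remote address and protocol, add the implied rules at priority 65535, and apply the one with the lowest priority number. When two rules have the same priority, deny wins over allow. This is a simplified Python model. It does not cover targets (network tags, service accounts), ports, or stateful return traffic. `Rule` and `evaluate` are hypothetical names.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    direction: str              # "INGRESS" or "EGRESS"
    action: str                 # "allow" or "deny"
    priority: int               # 0 is highest, 65535 is lowest
    ranges: tuple               # source CIDRs (ingress) or destination CIDRs (egress)
    protocols: tuple = ("all",)


# Implied rules exist in every VPC network and cannot be removed.
IMPLIED_RULES = (
    Rule("INGRESS", "deny", 65535, ("0.0.0.0/0",)),
    Rule("EGRESS", "allow", 65535, ("0.0.0.0/0",)),
)


def matches(rule, direction, remote_ip, protocol):
    """True if the rule covers this direction, protocol and remote IPv4 address."""
    if rule.direction != direction:
        return False
    if "all" not in rule.protocols and protocol not in rule.protocols:
        return False
    addr = ipaddress.ip_address(remote_ip)
    return any(addr in ipaddress.ip_network(cidr) for cidr in rule.ranges)


def evaluate(rules, direction, remote_ip, protocol):
    """Return the winning action for a single packet (no connection tracking)."""
    candidates = [r for r in (*rules, *IMPLIED_RULES)
                  if matches(r, direction, remote_ip, protocol)]
    # Lowest priority number wins; on a tie, deny (False sorts first) beats allow.
    winner = min(candidates, key=lambda r: (r.priority, r.action != "deny"))
    return winner.action


if __name__ == "__main__":
    # VM 3's egress rule from the example: deny TCP to 192.168.1.0/24 at priority 1000.
    vm3 = [Rule("EGRESS", "deny", 1000, ("192.168.1.0/24",), ("tcp",))]
    print(evaluate(vm3, "EGRESS", "192.168.1.4", "tcp"))  # deny
    print(evaluate(vm3, "EGRESS", "192.168.1.4", "udp"))  # allow (implied egress rule)
```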

https://cloud.google.com/vpc/docs/firewalls#alwaysallowed

GCP runs a local metadata server alongside each instance at 169.254.169.254. This server is essential to the operation of the instance, so the instance can access it regardless of any firewall rules you configure. The metadata server provides the following basic services to the instance:

NTP : Network Time Protocol

Instance metadata

DNS resolution, following the name resolution order for the VPC network. Unless you have configured an alternative name server, DNS resolution includes looking up Compute Engine Internal DNS, querying Cloud DNS zones, and public DNS names

DHCP

Egress traffic on TCP port 25 (SMTP) from:

• instances to other instances addressed by external IP address

• instances to the Internet

Protocols other than TCP, UDP, ICMP, and IPIP, for traffic between:

• instances if a load balancer with an external IP address is involved

• instances if they are addressed with external IP addresses

• instances and the Internet

GRE traffic:

All sources, all destinations, including among instances using internal IP addresses

https://cloud.google.com/vpc/docs/firewalls#blockedtraffic

https://cloud.google.com/vpc/docs/using-firewalls

https://cloud.google.com/vpc/docs/firewalls

Firewall rules cannot allow traffic in one direction while denying the associated return traffic.

GCP firewall rules are stateful.

When you create a firewall rule, you must select a VPC network. While the rule is enforced at the instance level, its configuration is associated with a VPC network. This means you cannot share firewall rules among VPC networks, including networks connected by VPC Network Peering or by using Cloud VPN tunnels.

Each firewall rule applies to incoming (ingress) or outgoing (egress) traffic, not both.

Each firewall rule's action is either allow or deny. The rule applies to traffic as long as it is enforced. You can disable a rule for troubleshooting purposes, for example.

Firewall rules only support IPv4 traffic. When specifying a source for an ingress rule or a destination for an egress rule by address, you can only use an IPv4 address or IPv4 block in CIDR notation.

*https://cloud.google.com/iam/docs/job-functions/networking

*https://cloud.google.com/iam/docs/job-functions/billing

*https://cloud.google.com/iam/docs/using-iam-securely

*https://cloud.google.com/iam/docs/understanding-service-accounts

*https://cloud.google.com/iam/docs/resource-hierarchy-access-control

https://cloud.google.com/iam/docs/service-accounts

https://cloud.google.com/iam/docs/concepts

Link

https://cloud.google.com/compute/docs/instances/managing-instance-access

https://cloud.google.com/compute/docs/oslogin/

Ability to import existing Linux accounts - Administrators can choose to optionally synchronize Linux account information from Active Directory (AD) and Lightweight Directory Access Protocol (LDAP) that are set up on-premises. For example, you can ensure that users have the same user ID (UID) in both your Cloud and on-premises environments.

Automatic permission updates - With OS Login, permissions are updated automatically when an administrator changes Cloud IAM permissions. For example, if you remove IAM permissions from a Google identity, then access to VM instances is revoked. Google checks permissions for every login attempt to prevent unwanted access.

Fine grained authorization using Google Cloud IAM - Project and instance-level administrators can use IAM to grant SSH access to a user's Google identity without granting a broader set of privileges. For example, you can grant a user permissions to log into the system, but not the ability to run commands such as sudo. Google checks these permissions to determine whether a user can log into a VM instance.

Automatic Linux account lifecycle management - You can directly tie a Linux user account to a user's Google identity so that the same Linux account information is used across all instances in the same project or organization.

Use OS Login to manage SSH access to your instances using IAM without having to create and manage individual SSH keys

Service accounts

In addition to being an identity, a service account is a resource which has IAM policies attached to it. These policies determine who can use the service account.

Custom Roles

The etag property is used to check whether the custom role has changed since the last request. When you make a request to Cloud IAM with an etag value, Cloud IAM compares it with the current etag of the custom role and writes the change only if the two values match.
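The etag check is a form of optimistic concurrency control. You read the role together with its etag, change it locally, and write it back with the etag you read. If the role changed in the meantime, the write is rejected and you re-read. This is a minimal in-memory sketch. `RoleStore` and `EtagMismatch` are hypothetical names and are not part of the Cloud IAM client library.

```python
import uuid


class EtagMismatch(Exception):
    """Raised when the caller's etag is stale (hypothetical)."""


class RoleStore:
    """Hypothetical in-memory stand-in for one custom role; not the IAM API."""

    def __init__(self, permissions):
        self.permissions = set(permissions)
        self.etag = uuid.uuid4().hex

    def get(self):
        # Return a copy of the permissions plus the etag of this version.
        return set(self.permissions), self.etag

    def update(self, permissions, etag):
        # Write only if the caller saw the current version of the role.
        if etag != self.etag:
            raise EtagMismatch("role changed since it was read; re-read and retry")
        self.permissions = set(permissions)
        self.etag = uuid.uuid4().hex  # every successful write gets a new etag
```

With two editors, the first `update` succeeds. The second, still holding the old etag, gets `EtagMismatch` instead of silently overwriting the first change.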

https://cloud.google.com/iam/docs/creating-custom-roles

https://cloud.google.com/iam/docs/understanding-custom-roles

In addition to the predefined roles, Cloud IAM also provides the ability to create customized Cloud IAM roles. You can create a custom Cloud IAM role with one or more permissions and then grant that custom role to users who are part of your organization

Predefined roles

In addition to the primitive roles, Cloud IAM provides additional predefined roles that give granular access to specific Google Cloud Platform resources and prevent unwanted access to other resources.

Primitive roles

Viewer

Editor

Owner

There are three roles that existed prior to the introduction of Cloud IAM: Owner, Editor, and Viewer. These roles are concentric; that is, the Owner role includes the permissions in the Editor role, and the Editor role includes the permissions in the Viewer role.

'Metadata-Flavor: Google' must be included in the headers of every metadata server request

Default Project & Instance Metadata

https://cloud.google.com/compute/docs/storing-retrieving-metadata#default
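This sketch queries the metadata server with the required header, using only the Python standard library. The read only works on a Compute Engine VM, where metadata.google.internal resolves to 169.254.169.254. `instance/hostname` and `project/project-id` are example paths, and the helper names are hypothetical.

```python
import urllib.request

METADATA_ROOT = "http://metadata.google.internal/computeMetadata/v1/"


def metadata_request(path: str) -> urllib.request.Request:
    """Build a request for a metadata path, with the mandatory header set."""
    # Requests without Metadata-Flavor: Google are rejected by the server.
    return urllib.request.Request(
        METADATA_ROOT + path, headers={"Metadata-Flavor": "Google"}
    )


def read_metadata(path: str, timeout: float = 2.0) -> str:
    """Fetch a metadata value; only succeeds from inside a Compute Engine VM."""
    with urllib.request.urlopen(metadata_request(path), timeout=timeout) as resp:
        return resp.read().decode("utf-8")
```

On a VM, `read_metadata("project/project-id")` would return the project ID. Outside GCP, the hostname does not resolve and `urlopen` raises an error.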

Vertical Pod Autoscaler (VPA) (currently NOT GA)

https://github.com/kubernetes/autoscaler/tree/master/vertical-pod-autoscaler

Cluster {NODE} Autoscaler (CA)

The cluster autoscaler handles autoscaling of nodes only. It is triggered by pods stuck in 'Pending' status; when it finds them, it adds a node so that the pending pods can be scheduled.

Horizontal Pod Autoscaler (HPA)

If the cluster is maxed out, new pods cannot be scheduled and stay in 'Pending' status (not running) due to lack of resources.

The HPA monitors pods (which must have resource requests set). If it finds that utilization is above (or below) the target, it changes the replica count of the Deployment to add or remove pods. It updates the live Deployment object, not your deployment.yml file.
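The Kubernetes HPA documentation describes the core calculation as desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue). No change is made when that ratio is within a tolerance of 1.0 (0.1 by default). This is a minimal Python sketch of that formula. `min_replicas`/`max_replicas` stand in for the HPA's minReplicas/maxReplicas, and their defaults here are hypothetical. Stabilization windows and pods that are not yet ready are ignored.

```python
import math


def hpa_desired_replicas(current_replicas, current_metric, target_metric,
                         min_replicas=1, max_replicas=10, tolerance=0.1):
    """Sketch of the HPA replica formula, e.g. metrics as % of CPU request."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: leave it alone
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))
```

For example, 3 pods averaging 90% CPU against a 50% target give ceil(3 * 1.8) = 6 replicas.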

Stateful sets

Use for ordered pod creation for stateful applications

Daemon sets

Runs a single pod on every node, for background tasks (e.g. logging or monitoring agents)

Deployments

Let you scale up and down with demand

https://cloud.google.com/solutions/heterogeneous-deployment-patterns-with-kubernetes

https://cloud.google.com/kubernetes-engine/docs/concepts/persistent-volumes

https://blog.purestorage.com/the-kubernetes-storage-eco-system-explained/

Dynamic Provisioning w/ Storage Classes

Applied using a yaml file

The provisioner is the Kubernetes name for the (CSI) plugin that lets the admin connect to multiple back-end storage resources (S3/NAS/NFS/GCP buckets).

PV Subsystem

Storageclass (SC) [Makes it Dynamic]

Container Storage Interface (CSI): a plugin/connector that consumes from the PV subsystem. CSI is open source; storage providers write their own plugins for the PV subsystem.

Storageclass (SC)

PersistentVolumeClaim(PVC) ['Token' to use PV]

Persistent Volume (PV) Storage resource ie 20GB SSD volume

Back-end storage services (S3, GCP buckets, enterprise arrays) connect to
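This sketch shows dynamic provisioning: a StorageClass that names a provisioner, plus a PersistentVolumeClaim that references it through storageClassName. It assumes GKE's Compute Engine Persistent Disk CSI driver (`pd.csi.storage.gke.io`); older clusters used the in-tree `kubernetes.io/gce-pd` provisioner. `fast-ssd` and `my-claim` are hypothetical names. The script prints a JSON `v1` List, which `kubectl apply -f` accepts just like YAML.

```python
import json


def storage_class(name, disk_type="pd-ssd"):
    """StorageClass: tells Kubernetes how to create PVs on demand."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "pd.csi.storage.gke.io",  # assumed: GKE PD CSI driver
        "parameters": {"type": disk_type},
    }


def pvc(name, storage_class_name, size="20Gi"):
    """PersistentVolumeClaim: the 'token' a pod uses to get a PV."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class_name,
            "resources": {"requests": {"storage": size}},
        },
    }


def manifest_list(*objects):
    """Wrap several objects so a single 'kubectl apply -f' creates them all."""
    return {"apiVersion": "v1", "kind": "List", "items": list(objects)}


if __name__ == "__main__":
    print(json.dumps(manifest_list(storage_class("fast-ssd"),
                                   pvc("my-claim", "fast-ssd")), indent=2))
```

Usage: `python storage.py > storage.json`, then `kubectl apply -f ./storage.json`. Check the result with `kubectl get sc` and `kubectl get pvc`. A PV appears under `kubectl get pv` once the claim is bound.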

Node Network

Pod Network

Service network

kube-proxy

commands

kubectl get rs -o wide (rs = ReplicaSets)

kubectl get sc [StorageClassName]

kubectl get sc

kubectl get pvc

kubectl get pv

kubectl apply -f ./simple-web.yml

kubectl get svc --watch

kubectl get svc

kubectl apply -f ./ping-deploy.yml

kubectl exec -it [podname] -- bash

apt-get install iputils-ping curl dnsutils iproute2 -y

kubectl get pods -o wide

kubectl get nodes -o jsonpath='{.items[*].spec.podCIDR}'

kubectl get deploy

kubectl get hpa --namespace [thenamespace]

kubectl get deploy --namespace [thenamespace]

kubectl rollout undo deploy [deploymentname]

The best way to roll back is to revert the deployment.yml and reapply the deployment.

NOTE! 'kubectl rollout undo' is an imperative command. It does not restore the declarative deployment.yaml, so there is a risk that Kubernetes will later redeploy the configuration as defined in the deployment.yaml.

kubectl rollout history deploy [deploymentname]

kubectl apply --filename=[filename].yml --record=true

records the command in the deployment's kubernetes.io/change-cause annotation, which 'kubectl rollout history' displays

kubectl describe [nodes] [pods] [deploy]

kubectl get [nodes] [pods]

https://cloud.google.com/appengine/docs/

https://cloud.google.com/appengine/

https://cloud.google.com/appengine/docs/the-appengine-environments

Review the App Engine comparisons!

Flexible

Flexible is optimal for

Applications where:

It accesses the resources or services of your Google Cloud Platform project that reside in the Compute Engine network.

It uses or depends on frameworks that include native code.

It runs in a Docker container that includes a custom runtime or source code written in other programming languages.

Source code is written in a version of any of the supported programming languages: Python, Java, Node.js, Go, Ruby, PHP, or .NET

Applications that receive consistent traffic, experience regular traffic fluctuations, or meet the parameters for scaling up and down gradually

Application instances run within Docker containers on Compute Engine virtual machines

Standard

Standard is optimal for applications

Intended to run for free or at very low cost

With sudden and extreme spikes of traffic which require immediate scaling

Written in specific versions of the supported programming languages

Go 1.9, Go 1.11, and Go 1.12 (beta)

PHP 5.5, and PHP 7.2

Node.js 8, and Node.js 10

Java 8

Python 2.7, Python 3.7

Applications instances run in a sandbox, using the runtime environment of a supported language

https://cloud.google.com/compute/docs/autoscaler/scaling-stackdriver-monitoring-metrics

Scale using per-group metrics (Beta) where the group scales based on a metric that provides a value related to the whole managed instance group.

Scale using per-instance metrics where the selected metric provides data for each instance in the managed instance group indicating resource utilization.

You can create custom metrics using Stackdriver Monitoring and write your own monitoring data to the Stackdriver Monitoring service. This gives you side-by-side access to standard Cloud Platform data and your custom monitoring data, with a familiar data structure and consistent query syntax. If you have a custom metric, you can choose to scale based on the data from these metrics.

Stackdriver Monitoring has a set of standard metrics that you can use to monitor your virtual machine instances. However, not all standard metrics are a valid utilization metric that the autoscaler can use.

A valid utilization metric for scaling meets the following criteria:

The standard metric describes how busy an instance is, and the metric value increases or decreases proportionally to the number of virtual machine instances in the group.

The standard metric must contain data for a gce_instance monitored resource. You can use the timeSeries.list API call to verify whether a specific metric exports data for this resource.

Autoscaler cannot perform autoscaling when there is a backup target pool attached to the primary target pool because when the autoscaler scales down, some instances will start failing health checks from the load balancer. If the number of failed health checks reaches the failover ratio, the load balancer will start redirecting traffic to the backup target pool, causing the utilization of the managed instance group in the primary target pool to drop to zero. As a result, the autoscaler won't be able to accurately scale the managed instance group in the primary target pool. For this reason, we recommend that you do not assign a backup target pool when using autoscaler.

You can autoscale a managed instance group that is part of a network load balancer target pool using CPU utilization or custom metrics. For more information, see Scaling Based on CPU utilization or Scaling Based on Stackdriver Monitoring Metrics.

A network load balancer distributes load using lower-level protocols such as TCP and UDP. Network load balancing lets you distribute traffic that is not based on HTTP(S), such as SMTP.

https://cloud.google.com/compute/docs/autoscaler/scaling-cpu-load-balancing#scaling_based_on_https_load_balancing_serving_capacity

What is a backend service?

https://cloud.google.com/load-balancing/images/basic-http-load-balancer.svg

The backend service performs various functions, such as:

Maintaining session affinity

Monitoring backend health according to a health check

Directing traffic according to a balancing mode

A backend service directs traffic to backends, which are instance groups or network endpoint groups

A backend service is a resource with fields containing configuration values for the following GCP load balancing services:

Internal Load Balancing

TCP Proxy Load Balancing

SSL Proxy Load Balancing

HTTP(S) Load Balancing

You can define the load balancing serving capacity of the instance groups associated with the backend as maximum CPU utilization, maximum requests per second (RPS), or maximum requests per second of the group

An HTTP(S) load balancer spreads load across backend services, which distribute traffic among instance groups.

https://cloud.google.com/compute/docs/instance-groups/#unmanaged_instance_groups

Unmanaged instance groups can contain heterogeneous instances that you can arbitrarily add and remove from the group. Unmanaged instance groups do not offer autoscaling, auto-healing, rolling update support, or the use of instance templates and are not a good fit for deploying highly available and scalable workloads. Use unmanaged instance groups if you need to apply load balancing to groups of heterogeneous instances or if you need to manage the instances yourself.

https://cloud.google.com/compute/images/mig-overview.svg

Benefits

Automated updates

Demo of MIG capabilities (YouTube)

https://youtu.be/uaoauF5p7gw

By default, instances in the group will be placed in the default network and randomly assigned IP addresses from the regional range. Alternatively, you can restrict the IP range of the group by creating a custom mode VPC network and subnet that uses a smaller IP range, then specifying this subnet in the instance template.

Containers

You can simplify application deployment by deploying containers to instances in managed instance groups. When you specify a container image in an instance template and then use that template to create a managed instance group, each instance will be created with a container-optimized OS that includes Docker, and your container will start automatically on each instance in the group. See Deploying containers on VMs and managed instance groups.

Groups of preemptible instances:

For workloads where minimal costs are more important than speed of execution, you can reduce the cost of your workload by using preemptible VM instances in your instance group. Preemptible instances last up to 24 hours, and are preempted gracefully - your application will have 30 seconds to exit correctly. Preemptible instances can be deleted at any time, but autohealing will bring the instances back when preemptible capacity becomes available again.

Safely deploy new versions of software to instances in a managed instance group. The rollout of an update happens automatically based on your specifications: you can control the speed and scope of the update rollout in order to minimize disruptions to your application. You can optionally perform partial rollouts which allows for canary testing

Scalability

Managed instance groups support autoscaling that dynamically adds or removes instances from a managed instance group in response to increases or decreases in load. You turn on autoscaling and configure an autoscaling policy to specify how you want the group to scale. Autoscaling policies include scaling based on CPU utilization, load balancing capacity, Stackdriver monitoring metrics, or, for zonal MIGs, by a queue-based workload like Google Cloud Pub/Sub.

High Availability

Loadbalancing

GCP load balancing can use instance groups to serve traffic. Depending on the type of load balancer you choose, you can add instance groups to a target pool or to a backend service.

Regional or Zonal Groups

A regional managed instance group, which deploys instances to multiple zones across the same region.

A zonal managed instance group deploys instances to a single zone

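Autohealing: keeps instances in the RUNNING state, recreating instances that stop or fail their health check

Each instance in a MIG is created from the group's instance template

Suitable for stateless workloads (e.g. frontends, batch workloads, high-throughput workloads)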

Autoscaling policy and target utilization

https://cloud.google.com/compute/docs/load-balancing-and-autoscaling#policies

To create an autoscaler, you must specify the autoscaling policy and a target utilization level. You can choose to scale using the following policies:

Stackdriver Monitoring metrics

HTTP load balancing serving capacity, which can be based on either utilization or requests per second.

Average CPU utilization

Autoscaling is a feature of managed instance groups. A managed instance group is a pool of homogeneous instances, created from a common instance template. An autoscaler adds or deletes instances from a managed instance group. Although Compute Engine has both managed and unmanaged instance groups, only managed instance groups can be used with autoscaler.
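Target utilization can be reasoned about roughly as follows. Suppose load spreads evenly across instances, which is the proportionality criterion listed for valid utilization metrics. Then the group size that brings average utilization back to target is about ceil(current size * observed / target). This is an approximation for exam reasoning only. It is not the Compute Engine autoscaler's actual algorithm, which also accounts for initialization periods and stabilization. Utilizations are integer percentages, and `min_size`/`max_size` are hypothetical parameters.

```python
def recommended_size(current_size: int, observed_pct: int, target_pct: int,
                     min_size: int = 1, max_size: int = 10) -> int:
    """Approximate MIG size that brings average utilization back to target."""
    total_load = current_size * observed_pct       # load in "instance-percent"
    size = -(-total_load // target_pct)            # integer ceiling division
    return max(min_size, min(max_size, size))      # respect group bounds
```

For example, 4 instances at 90% CPU with a 60% target suggest about 6 instances. At 30% with the same target, 10 instances could shrink to about 5.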

Use the 'gcloud compute addresses list' command to list the static IP addresses available to the project

Only one resource at a time can use a static internal IP address

Reserve up to 200 static internal IP addresses per region by default

You cannot 'change' the internal IP address of an existing resource

Opt 2: Promote an existing address

Opt 1: Reserve a new static IP & assign to a new VM

https://cloud.google.com/compute/docs/ip-addresses/reserve-static-internal-ip-address

Custom Extensions

Example of a valid custom machine type: 32 vCPUs with 29 GB of RAM

The total memory must be a multiple of 256 MB

Memory must be between 0.9 GB (min) and 6.5 GB (max) per vCPU

Only machine types with 1 CPU or an even number of CPUs can be created

The Max CPUs available may vary depending on the zone and machine types therein
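These rules can be checked with integer arithmetic: 1 or an even number of vCPUs, total memory a multiple of 256 MB, and 0.9 to 6.5 GB per vCPU. This sketch assumes 1 GB = 1024 MB. The `extended_memory` flag is a hypothetical switch that lifts the per-vCPU ceiling, and per-zone vCPU limits are not modeled.

```python
def valid_custom_machine_type(vcpus: int, memory_mb: int,
                              extended_memory: bool = False) -> bool:
    """Check the custom machine type rules listed above (sketch)."""
    # 1 vCPU or an even number of vCPUs.
    if vcpus < 1 or (vcpus > 1 and vcpus % 2 != 0):
        return False
    # Total memory must be a multiple of 256 MB.
    if memory_mb % 256 != 0:
        return False
    # At least 0.9 GB per vCPU: memory_mb / vcpus >= 0.9 * 1024.
    if memory_mb * 10 < vcpus * 9 * 1024:
        return False
    # At most 6.5 GB per vCPU, unless extended memory is used.
    if not extended_memory and memory_mb * 2 > vcpus * 13 * 1024:
        return False
    return True
```

The example above, 32 vCPUs with 29 GB (29,696 MB), passes. 3 vCPUs fails on the odd count, and 1 vCPU with 7 GB only passes with extended memory.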

custom-memory

Custom-CPU

Custom machine types let you adjust the predefined types, but you cannot add more RAM per vCPU than the 'highmem' machine types provide unless you use 'extended-memory'

Predefined machine types are named by their vCPU count (e.g. n1-standard-4); in the standard, highmem and highcpu families these are powers of 2 up to 64, plus 96

Hi-cpu : 0.90 GB of memory per vCPU

Hi-mem : 6.50GB of memory per vCPU

Standard : 3.75 GB of memory per vCPU

Updating managed instance groups

https://cloud.google.com/compute/docs/instance-groups/updating-managed-instance-groups

Stopped instance

https://cloud.google.com/compute/docs/instances/changing-machine-type-of-stopped-instance

https://cloud.google.com/compute/docs/

https://cloud.google.com/compute/

https://cloud.google.com/compute/docs/nodes/

https://cloud.google.com/compute/docs/instances/windows/

https://cloud.google.com/compute/docs/instance-groups/

https://cloud.google.com/compute/docs/instance-templates/

https://cloud.google.com/compute/docs/instances/preemptible

https://cloud.google.com/compute/docs/instances/live-migration

https://cloud.google.com/compute/docs/images

https://cloud.google.com/compute/docs/gpus

https://cloud.google.com/compute/docs/cpu-platforms

https://cloud.google.com/compute/docs/machine-types

https://cloud.google.com/compute/docs/instances/instance-life-cycle

https://cloud.google.com/compute/docs/instances/

https://cloud.google.com/compute/docs/launch-checklist

Cloud Next '19 Deep Dive

https://youtu.be/HUHBq_VGgFg

https://cloud.google.com/load-balancing/docs/load-balancing-overview#global_versus_regional_load_balancing

Cross-region overflow and failover

Load balancing for cloud storage

Monitoring and logging

Connection draining

IPv6 and IPv4 client termination

IP address and cookie-based affinity

Instances globally distributed

Single anycast IP address

HTTPS, HTTP, or TCP/SSL

Autoscaling

IPv4 only

Session affinity

Health checks

Single IP address per region

Instances in one region

UDP or TCP/SSL traffic

Internal TCP/UDP Load Balancing

https://cloud.google.com/load-balancing/docs/choosing-load-balancer

Traffic type

Network TCP/UDP

TCP Proxy

HTTPS

SSL

External vs internal?

Global vs Regional?

https://cloud.google.com/vpc/images/private-services-access-ranges.svg

https://cloud.google.com/vpc/docs/vpc

https://cloud.google.com/vpc/

CIDR Notation (YouTube)

https://youtu.be/86RDE_bP1Bs

https://youtu.be/iDiGBIOq7ec
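CIDR arithmetic in brief: a /n IPv4 prefix contains 2^(32 - n) addresses, so a /20 holds 4,096. The sketch below uses Python's standard `ipaddress` module; the four reserved addresses per primary subnet range reflect GCP's documented behaviour, and the example range is arbitrary.

```python
import ipaddress

# GCP reserves four addresses in each primary subnet range: the network address,
# the default gateway (first host), the second-to-last address and the broadcast address.
GCP_RESERVED = 4


def describe_subnet(cidr: str) -> dict:
    net = ipaddress.ip_network(cidr)
    return {
        "addresses": net.num_addresses,                 # 2 ** (32 - prefix length)
        "usable_in_gcp": net.num_addresses - GCP_RESERVED,
        "network": str(net.network_address),
        "gateway": str(net.network_address + 1),
        "broadcast": str(net.broadcast_address),
    }


print(describe_subnet("10.128.0.0/20"))
```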

https://cloud.google.com/vpc/docs/alias-ip

Google Cloud Platform (GCP) alias IP ranges let you assign ranges of internal IP addresses as aliases to a virtual machine's (VM) network interfaces. This is useful if you have multiple services running on a VM and you want to assign each service a different IP address.
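A sketch of the idea, assuming a hypothetical subnet with one primary and one secondary range: an alias IP range must come from the subnet's primary or secondary ranges, and each service on the VM then gets its own address from that alias range. The helper name and ranges are illustrative; in GCP the alias range itself is configured on the VM's network interface, not computed like this.

```python
import ipaddress


def assign_alias_ips(subnet_ranges, alias_range, services):
    """Map each service name to one address from a VM's alias IP range.

    subnet_ranges: the subnet's primary range plus any secondary ranges.
    """
    alias = ipaddress.ip_network(alias_range)
    if not any(alias.subnet_of(ipaddress.ip_network(r)) for r in subnet_ranges):
        raise ValueError(f"{alias} is not inside any of the subnet's ranges")
    addresses = list(alias)  # hand out addresses in order (illustrative only)
    if len(services) > len(addresses):
        raise ValueError(f"{alias} has only {len(addresses)} addresses")
    return {svc: str(ip) for svc, ip in zip(services, addresses)}


# Hypothetical subnet: primary 10.10.0.0/24, secondary 10.20.0.0/24.
mapping = assign_alias_ips(
    ["10.10.0.0/24", "10.20.0.0/24"], "10.20.0.16/30", ["web", "api", "metrics"]
)
print(mapping)
```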

VPC Network Peering

Restrictions:

Overlapping subnets

When a VPC subnet is created or a subnet IP range is expanded, GCP performs a check to make sure the new subnet range does not overlap with IP ranges of subnets in the same VPC network or in directly peered VPC networks. If it does, the creation or expansion action fails.

Peering is not established if there are overlapping subnets at the time of peering.

By default, VPC Network Peering with GKE is supported when used with IP aliases. If you don't use IP aliases, you can export custom routes so that GKE containers are reachable from peered networks.

Compute Engine internal DNS names created in a network are not accessible to peered networks. Use the IP address to reach the VM instances in peered network.

You cannot use a tag or service account from one peered network in the other peered network.

Only directly peered networks can communicate. Transitive peering is not supported. In other words, if VPC network N1 is peered with N2 and N3, but N2 and N3 are not directly connected, VPC network N2 cannot communicate with VPC network N3 over VPC Network Peering.

You can include static and dynamic routes by enabling exporting and importing custom routes on your peering connections. Both sides of the connection must be configured to import or export custom routes. For more information, see Importing and exporting custom routes.

You can't disable the subnet route exchange or select which subnet routes are exchanged. After peering is established, all resources within subnet IP addresses are accessible across directly peered networks. VPC Network Peering doesn't provide granular route controls to filter out which subnet CIDR ranges are reachable across peered networks. You must use firewall rules to filter traffic if that's required. The following types of endpoints and resources are reachable across any directly peered networks:

Virtual machine (VM) internal IPs in all subnets

Internal load balanced IPs in all subnets

Only VPC networks are supported for VPC Network Peering. Peering is NOT supported for legacy networks.

A dynamic route can overlap with a subnet route in a peer network. For dynamic routes, the destination ranges that overlap with a subnet route from the peer network are silently dropped. GCP uses the subnet route.

A subnet CIDR range in one peered VPC network cannot overlap with a static route in another peered network. This rule covers both subnet routes and static routes. GCP checks for overlap in the following circumstances and generates an error when an overlap occurs:

When you peer VPC networks for the first time

When you create a static route in a peered VPC network

When you create a new subnet in a peered VPC network
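Both restrictions can be checked offline before requesting a peering. A minimal sketch using the standard `ipaddress` module: `overlapping_ranges` flags colliding CIDR ranges (the same test applies to static route destination ranges), and `can_communicate` encodes the no-transitive-peering rule. Network names and ranges are hypothetical.

```python
import ipaddress
from itertools import product


def overlapping_ranges(ranges_a, ranges_b):
    """Every (a, b) pair of CIDR ranges from two networks that overlap."""
    return [
        (a, b)
        for a, b in product(ranges_a, ranges_b)
        if ipaddress.ip_network(a).overlaps(ipaddress.ip_network(b))
    ]


def can_communicate(peerings, src, dst):
    """True only for the same network or a direct peering (no transitive peering)."""
    return src == dst or frozenset((src, dst)) in peerings


# Hypothetical ranges for two networks we would like to peer.
net_a_ranges = ["10.0.0.0/16"]
net_b_ranges = ["10.1.0.0/16", "10.0.128.0/20"]
print(overlapping_ranges(net_a_ranges, net_b_ranges))  # non-empty: peering would fail

# N1 peers with N2 and N3; N2 and N3 are not peered with each other.
peerings = {frozenset(("N1", "N2")), frozenset(("N1", "N3"))}
print(can_communicate(peerings, "N1", "N2"))
print(can_communicate(peerings, "N2", "N3"))
```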

Key Properties

Billing policy for peering traffic is the same as the billing policy for private traffic in the same network.

Peering traffic (traffic flowing between peered networks) has the same latency, throughput, and availability as private traffic in the same network.

IAM permissions for creating and deleting VPC Network Peering are included as part of the project owner, project editor, and network admin roles.

A given VPC network can peer with multiple VPC networks, but there is a limit.

Subnet and static routes are global. Dynamic routes can be regional or global, depending on the VPC network's dynamic routing mode.

VPC peers always exchange all subnet routes. You can also exchange custom routes (static and dynamic routes), depending on whether the peering configurations have been configured to import or export them.

Peering and the option to import and export custom routes can be configured for one VPC network even before the other VPC network is created.

Each side of a peering association is set up independently. Peering will be active only when the configuration from both sides matches. Either side can choose to delete the peering association at any time.

Peered VPC networks remain administratively separate. Routes, firewalls, VPNs, and other traffic management tools are administered and applied separately in each of the VPC networks.

VPC Network Peering works with Compute Engine, GKE, and App Engine flexible environment.
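The independent, two-sided setup can be modelled in a few lines. This is an illustrative sketch, not the Compute Engine API: `PeeringConfig` is a hypothetical type, and the ACTIVE/INACTIVE strings mirror the peering states that gcloud reports. It also shows the custom-route rule noted above: custom routes cross only when one side exports and the other imports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeeringConfig:
    """One side of a peering, created independently in that network's project."""
    local: str
    peer: str
    export_custom_routes: bool = False
    import_custom_routes: bool = False


def peering_state(a: PeeringConfig, b: PeeringConfig) -> str:
    # Active only when each side's configuration points at the other.
    return "ACTIVE" if (a.local == b.peer and b.local == a.peer) else "INACTIVE"


def custom_routes_flow(exporter: PeeringConfig, importer: PeeringConfig) -> bool:
    # Subnet routes are always exchanged; custom routes need export on one side
    # and import on the other, over an active peering.
    return (peering_state(exporter, importer) == "ACTIVE"
            and exporter.export_custom_routes
            and importer.import_custom_routes)


a = PeeringConfig("net-a", "net-b", export_custom_routes=True)
b_wrong = PeeringConfig("net-b", "net-x")  # other side points at the wrong network
b = PeeringConfig("net-b", "net-a", import_custom_routes=True)
print(peering_state(a, b_wrong), peering_state(a, b))
print(custom_routes_flow(a, b), custom_routes_flow(b, a))
```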

Advantages:

Network Cost: GCP charges egress bandwidth pricing for networks that use external IPs to communicate, even if the traffic is within the same zone. If, however, the networks are peered, they can use internal IPs to communicate and save on those egress costs. Regular network pricing still applies to all traffic.

Network Security: Service owners do not need to have their services exposed to the public Internet and deal with its associated risks.

Network Latency: Public IP networking suffers higher latency than private networking. All peering traffic stays within Google's network.

Useful for:

Organizations with several network administrative domains can peer with each other.

SaaS (Software-as-a-Service) ecosystems in GCP. You can make services available privately across different VPC networks within and across organizations.

VPC Network Peering enables you to peer VPC networks so that workloads in different VPC networks can communicate in private RFC 1918 space. Traffic stays within Google's network and doesn't traverse the public internet.

Working with Subnets

https://tools.ietf.org/html/rfc1918

https://cloud.google.com/vpc/docs/using-vpc#subnet-rules

Private/reserved IP address ranges

Reserved

169.254.0.0 - 169.254.255.255 (169.254.0.0/16, link-local; APIPA only)

127.0.0.0 - 127.255.255.255 (127.0.0.0/8, loopback testing)

0.0.0.0 - 0.255.255.255 (0.0.0.0/8, devices can't communicate in this range)

Private (RFC 1918)

192.168.0.0 - 192.168.255.255 (192.168.0.0/16)

172.16.0.0 - 172.31.255.255 (172.16.0.0/12)

10.0.0.0 - 10.255.255.255 (10.0.0.0/8)
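Checking whether an address falls in one of these ranges is short work with Python's standard `ipaddress` module. The sketch lists the RFC 1918 blocks explicitly rather than relying on `ip_address(...).is_private`, because `is_private` also returns True for loopback, link-local, and other special-purpose ranges. The `classify` helper is hypothetical.

```python
import ipaddress

RFC1918 = [ipaddress.ip_network(n) for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]
RESERVED = {
    "this network": ipaddress.ip_network("0.0.0.0/8"),
    "loopback": ipaddress.ip_network("127.0.0.0/8"),
    "link-local (APIPA)": ipaddress.ip_network("169.254.0.0/16"),
}


def classify(address: str) -> str:
    ip = ipaddress.ip_address(address)
    if any(ip in net for net in RFC1918):
        return "private (RFC 1918)"
    for label, net in RESERVED.items():
        if ip in net:
            return f"reserved: {label}"
    return "other"


for addr in ("10.128.0.5", "172.31.255.255", "172.32.0.1", "169.254.10.1", "8.8.8.8"):
    print(addr, "->", classify(addr))
```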

Custom mode

Regions must be specified

IP address ranges must be specified

Subnets must be created

Conversion from auto mode to custom mode is one-way; a custom-mode network cannot be converted back to auto mode

https://cloud.google.com/vpc/docs/using-vpc#switch-network-mode

Considerations for auto-mode vs. custom-mode networks

https://cloud.google.com/vpc/docs/vpc#auto-mode-considerations

Custom-mode: Use when you need complete control over the subnets created, including their regions and IP ranges

Custom-mode: Manual creation of subnets in each region could cause IP conflicts

Custom-mode: No auto creation of subnets in each region

Custom-mode: The Google-recommended approach for production networks

Custom-mode requires planning in advance

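Auto-mode (important): You cannot connect auto-mode networks to each other using VPC Network Peering or Cloud VPN, because every auto-mode network uses the same predefined IP ranges and their subnets therefore overlap.

Auto-mode: The predefined IP ranges do not overlap with each other within a network (no IP conflicts)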

Auto-mode creates a subnet in each region automatically and as regions are subsequently added

Auto-mode is easy to set up and maintain

Two modes are available: auto mode (the default) and custom mode
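Why auto-mode networks cannot be peered with each other, in numbers: every auto-mode network receives the same predefined /20 per region from 10.128.0.0/9. The sketch lists only two regions (us-central1 and europe-west1) as I recall them from the auto-mode IP range table; check the VPC documentation for the full, current list.

```python
import ipaddress

# Auto-mode subnets are /20 blocks carved from 10.128.0.0/9. Only two regions are
# listed here; the full predefined table is in the VPC docs.
AUTO_MODE_BLOCK = ipaddress.ip_network("10.128.0.0/9")
AUTO_MODE_RANGES = {
    "us-central1": "10.128.0.0/20",
    "europe-west1": "10.132.0.0/20",
}


def new_auto_mode_network():
    """Every auto-mode network receives the same predefined per-region ranges."""
    return {region: ipaddress.ip_network(cidr) for region, cidr in AUTO_MODE_RANGES.items()}


net_a = new_auto_mode_network()
net_b = new_auto_mode_network()

# Within one network the predefined ranges never overlap ...
no_internal_overlap = not net_a["us-central1"].overlaps(net_a["europe-west1"])
# ... but two auto-mode networks collide in every region, so peering or VPN fails.
collisions = [region for region in net_a if net_a[region].overlaps(net_b[region])]
print(no_internal_overlap, collisions)
```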

A subnet defines a range of IP addresses

Subnets are regional resources

Priority

The relative priority of a firewall rule determines whether it is applicable when evaluated against others. Priority is an integer from 0 to 65535 (default 1000), and a lower number means a higher priority. The evaluation logic works as follows:

Rules with the same priority and the same action have the same result. However, the rule that is used during the evaluation is indeterminate. Normally, it doesn't matter which rule is used except when you enable firewall rule logging. If you want your logs to show firewall rules being evaluated in a consistent and well-defined order, assign them unique priorities.

A rule with a deny action overrides another with an allow action only if the two rules have the same priority. Using relative priorities, it is possible to build allow rules that override deny rules, and vice versa.

The highest priority rule applicable for a given protocol and port definition takes precedence, even when the protocol and port definition is more general. For example, a higher priority ingress rule allowing traffic for all protocols and ports intended for given targets overrides a lower priority ingress rule denying TCP 22 for the same targets.

The highest priority rule applicable to a target for a given type of traffic takes precedence. Target specificity does not matter. For example, a higher priority ingress rule for certain ports and protocols intended for all targets overrides a similarly defined rule for the same ports and protocols intended for specific targets.

Implied firewall rules allow all outbound (egress) traffic and deny all inbound (ingress) traffic; both use the lowest possible priority, 65535.

GCP firewall rules are stateful: only the initial traffic of a flow needs to be explicitly permitted, and return traffic for that flow between those two systems is automatically allowed.
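The priority rules and implied rules above can be expressed as a small evaluation function. A simplified sketch that assumes every rule already applies to the same target and uses single ports rather than port ranges; the `Rule` type and rule names are hypothetical, not the Compute Engine API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Rule:
    name: str
    direction: str                  # "INGRESS" or "EGRESS"
    action: str                     # "allow" or "deny"
    priority: int = 1000            # 0 is highest, 65535 lowest
    protocol: Optional[str] = None  # None means all protocols
    port: Optional[int] = None      # None means all ports


# Implied rules sit at the lowest priority, so any explicit rule can override them.
IMPLIED = [
    Rule("implied-allow-egress", "EGRESS", "allow", 65535),
    Rule("implied-deny-ingress", "INGRESS", "deny", 65535),
]


def matches(rule: Rule, direction: str, protocol: str, port: int) -> bool:
    return (rule.direction == direction
            and rule.protocol in (None, protocol)
            and rule.port in (None, port))


def evaluate(rules: List[Rule], direction: str, protocol: str, port: int) -> str:
    """Return the action for one connection attempt to a single target."""
    candidates = [r for r in rules + IMPLIED if matches(r, direction, protocol, port)]
    best = min(r.priority for r in candidates)  # lowest number wins
    top = [r for r in candidates if r.priority == best]
    # Only at equal priority does a deny override an allow.
    return "deny" if any(r.action == "deny" for r in top) else "allow"


allow_all = Rule("allow-all-ingress", "INGRESS", "allow", priority=500)
deny_ssh = Rule("deny-ssh", "INGRESS", "deny", priority=1000, protocol="tcp", port=22)
print(evaluate([allow_all, deny_ssh], "INGRESS", "tcp", 22))  # higher-priority allow wins
print(evaluate([], "INGRESS", "tcp", 443))                    # implied deny
```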

https://www.youtube.com/watch?v=W-YAQCP2Bdg

https://www.youtube.com/watch?v=jQc9P7xA_wU

https://www.youtube.com/watch?v=0hN-dyOV10c

https://cloud.google.com/load-balancing/images/choose-lb.svg

https://cloud.google.com/sdk/gcloud/reference/compute/project-info/add-metadata

https://cloud.google.com/sdk/gcloud/reference/compute/networks/create

https://cloud.google.com/iam/docs/granting-changing-revoking-access

https://cloud.google.com/blog/products/management-tools/scripting-with-gcloud-a-beginners-guide-to-automating-gcp-tasks

https://medium.com/google-cloud/bash-hacks-gcloud-kubectl-jq-etc-c2ff351d9c3b

https://cloud.google.com/sdk/docs/configurations

https://cloud.google.com/sdk/docs/properties

https://cloud.google.com/sdk/gcloud/reference/

https://cloud.google.com/sdk/gcloud/

Billing and management

Build centers of excellence

Get help from the experts

Purchase a support package

Implement cost controls

Plan for your capacity requirements

Analyse and export your bill

Set up billing and permissions

Know how resources are charged

Cloud Architecture

Plan your disaster recovery strategy

https://cloud.google.com/solutions/dr-scenarios-planning-guide

Design for high availability

To help maintain uptime for mission-critical apps, design resilient apps that gracefully handle failures or unexpected changes in load.

Favour managed services

https://cloud.google.com/solutions/best-practices-compute-engine-region-selection

Use GCP managed services to help reduce operational burden and total cost of ownership (TCO).

Plan your migration

Logging, monitoring, and operations

Embrace DevOps and explore Site Reliability Engineering

https://landing.google.com/sre/workbook/toc/

https://landing.google.com/sre/sre-book/toc/index.html

Export your logs

Export logs to Cloud Storage, BigQuery, and Cloud Pub/Sub. Using filters, you can include or exclude resources from the export.

Set up an audit trail

Use Cloud Audit Logs to help answer questions like "who did what, where, and when" in your GCP projects.

Centralize logging and monitoring

Design Patterns: Exporting

https://cloud.google.com/solutions/design-patterns-for-exporting-stackdriver-logging

Networking & Security

Secure your apps and data

In addition to firewall rules and VPC isolation, use these additional tools to help secure and protect your apps:

Use VPC Service Controls to define a security perimeter around your GCP resources to constrain data within a VPC and help mitigate data exfiltration risks.

Use a GCP global HTTP(S) load balancer to support high availability and scaling for your internet-facing services.

Integrate Google Cloud Armor with the HTTP(S) load balancer to provide DDoS protection and the ability to blacklist and whitelist IP addresses at the network edge.

Control access to apps by using Cloud Identity-Aware Proxy (Cloud IAP) to verify user identity and the context of the request to determine if a user should be granted access.

Connect your enterprise network

Many enterprises need to connect existing on-premises infrastructure with their GCP resources. Evaluate your bandwidth, latency, and SLA requirements to choose the best connection option:

If you need low-latency, highly available, enterprise-grade connections that reliably transfer data between your on-premises and VPC networks without traversing the internet, use Cloud Interconnect:

Dedicated Interconnect provides a direct physical connection between your on-premises network and Google's network.

Partner Interconnect provides connectivity between your on-premises and GCP VPC networks through a supported service provider.

If you don't require the low latency and high availability of Cloud Interconnect, or you are just starting your cloud journey, use Cloud VPN to set up encrypted IPsec VPN tunnels between your on-premises network and VPC. Compared to a direct, private connection, an IPsec VPN tunnel has lower overhead and costs.
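The choice between these options reduces to a short decision. A simplified sketch; the second flag is my assumption: Dedicated Interconnect requires your network to meet Google's network in a supported colocation facility, and when that is not possible Partner Interconnect reaches Google through a service provider instead. Real decisions also weigh bandwidth and SLA needs, which are not modelled here.

```python
def choose_connection(needs_low_latency_and_ha: bool,
                      can_meet_google_in_colocation: bool) -> str:
    """Simplified reading of the connectivity guidance (bandwidth/SLA not modelled)."""
    if not needs_low_latency_and_ha:
        # Encrypted IPsec tunnels over the internet: lower overhead and cost.
        return "Cloud VPN"
    if can_meet_google_in_colocation:
        # Direct physical connection to Google's network.
        return "Dedicated Interconnect"
    # Connectivity through a supported service provider.
    return "Partner Interconnect"


print(choose_connection(needs_low_latency_and_ha=False, can_meet_google_in_colocation=False))
print(choose_connection(needs_low_latency_and_ha=True, can_meet_google_in_colocation=False))
```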

Centralize network control

Use Shared VPC to connect to a common VPC network.

A centralized network team can administer the network without having any permissions in the participating projects. Similarly, the project admins can manage their project resources without any permissions to manipulate the shared network.

Limit external access to resources that need it

Manage traffic with firewall rules

Firewall rules are specific to a particular VPC network.

The firewall is stateful, which means that for flows that are permitted, return traffic is automatically allowed.
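Statefulness means the firewall tracks connections rather than judging each packet alone. A toy connection tracker, for illustration only: the `Flow` and `StatefulFirewall` names are hypothetical, ingress rules are reduced to a set of open ports (protocol ignored), and the timeouts and protocol details of real GCP connection tracking are omitted. 203.0.113.0/24 is a documentation-only range.

```python
from typing import NamedTuple, Set


class Flow(NamedTuple):
    src: str
    dst: str
    protocol: str
    src_port: int
    dst_port: int

    def reply(self) -> "Flow":
        """The same connection seen in the opposite direction."""
        return Flow(self.dst, self.src, self.protocol, self.dst_port, self.src_port)


class StatefulFirewall:
    """Egress allowed (implied rule); ingress denied unless a port is open
    or the packet belongs to a flow that was already allowed out."""

    def __init__(self, open_ingress_ports: Set[int]):
        self.open_ingress_ports = open_ingress_ports
        self.tracked: Set[Flow] = set()

    def egress(self, flow: Flow) -> bool:
        self.tracked.add(flow)  # remember the flow so its replies are recognised
        return True

    def ingress(self, flow: Flow) -> bool:
        if flow.reply() in self.tracked:
            return True  # return traffic for a permitted flow
        return flow.dst_port in self.open_ingress_ports


fw = StatefulFirewall(open_ingress_ports=set())
request = Flow("10.0.0.2", "203.0.113.5", "tcp", 40000, 443)
fw.egress(request)
print(fw.ingress(request.reply()))                                   # reply allowed
print(fw.ingress(Flow("203.0.113.5", "10.0.0.2", "tcp", 5555, 22)))  # unsolicited
```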

Use VPC to define your network

https://cloud.google.com/solutions/best-practices-vpc-design

Use VPCs and subnets to map out your network, and to group and isolate related resources.

VPC networks are global resources.

VPC networks themselves do not define IP address ranges.

A VPC network consists of one or more partitions called subnetworks (subnets).

Each subnet in turn defines one or more IP address ranges.

Subnets are regional resources; each subnet is explicitly associated with a single region.
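These relationships (a global network with no IP range of its own, regional subnets that carry the ranges, and GCP's overlap check within a network) can be modelled with two small classes. A hypothetical sketch, not a client library; names, regions, and ranges are illustrative.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import List


@dataclass
class Subnet:
    name: str
    region: str        # each subnet belongs to exactly one region
    ranges: List[str]  # primary range first, then any secondary ranges


@dataclass
class VpcNetwork:
    name: str          # global: no region and no IP range of its own
    subnets: List[Subnet] = field(default_factory=list)

    def add_subnet(self, subnet: Subnet) -> None:
        """Reject a subnet whose ranges overlap any existing range in this network."""
        new_nets = [ipaddress.ip_network(r) for r in subnet.ranges]
        for existing in self.subnets:
            for cidr in existing.ranges:
                if any(n.overlaps(ipaddress.ip_network(cidr)) for n in new_nets):
                    raise ValueError(f"{subnet.name} overlaps {existing.name} ({cidr})")
        self.subnets.append(subnet)

    def regions(self):
        return {s.region for s in self.subnets}


vpc = VpcNetwork("prod-vpc")
vpc.add_subnet(Subnet("web-us", "us-central1", ["10.0.0.0/20"]))
vpc.add_subnet(Subnet("web-eu", "europe-west1", ["10.0.16.0/20"]))
try:
    vpc.add_subnet(Subnet("clash", "asia-east1", ["10.0.8.0/24"]))
except ValueError as err:
    print("rejected:", err)
print(sorted(vpc.regions()))
```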

Identity and Access management

Define an organisation policy

Use the Organization Policy Service to get centralized and programmatic control over your organization's cloud resources. Cloud IAM focuses on who, providing the ability to authorize users and groups to take action on specific resources based on permissions. An organization policy focuses on what, providing the ability to set restrictions on specific resources to determine how they can be configured and used.

Delegate responsibility with groups and service accounts

We recommend collecting users with the same responsibilities into groups and assigning Cloud IAM roles to the groups rather than to individual users.

Control access to resources

Cloud IAM enables you to control access by defining who (identity) has what access (role) for which resource.

Apply the security principle of least privilege

Rather than directly assigning permissions, you assign roles. Roles are collections of permissions.
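Who (identity), what access (role), which resource: an IAM policy is essentially a list of role-to-members bindings. The sketch below follows that bindings shape, grants roles to a group and a service account as recommended above, and resolves a user's access through group membership. The permission sets are deliberately small illustrative subsets of the real predefined roles, and all identities and helper names are hypothetical.

```python
# Illustrative subsets only; real predefined roles carry more permissions.
ROLE_PERMISSIONS = {
    "roles/storage.objectViewer": {"storage.objects.get", "storage.objects.list"},
    "roles/storage.objectAdmin": {
        "storage.objects.get", "storage.objects.list",
        "storage.objects.create", "storage.objects.delete",
    },
}

# Shaped like an IAM policy: roles bound to members (a group and a service account).
POLICY = {
    "bindings": [
        {"role": "roles/storage.objectViewer",
         "members": ["group:analysts@example.com"]},
        {"role": "roles/storage.objectAdmin",
         "members": ["serviceAccount:etl@example-project.iam.gserviceaccount.com"]},
    ]
}

# Hypothetical group membership, as a directory sync might provide it.
GROUPS = {"analysts@example.com": {"user:alice@example.com"}}


def has_permission(policy, member, permission):
    """True if the member, directly or via a group, holds a role with the permission."""
    identities = {member} | {f"group:{g}" for g, users in GROUPS.items() if member in users}
    for binding in policy["bindings"]:
        if identities & set(binding["members"]):
            if permission in ROLE_PERMISSIONS.get(binding["role"], set()):
                return True
    return False


print(has_permission(POLICY, "user:alice@example.com", "storage.objects.get"))
print(has_permission(POLICY, "user:alice@example.com", "storage.objects.delete"))
```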

Authorize your developers and IT staff to consume GCP resources

Migrate unmanaged accounts

Federate your identity provider

Synchronize your user directory with Cloud Identity

Manage your Google identities

Cloud Identity is a stand-alone Identity-as-a-Service (IDaaS) solution

We recommend using fully managed Google accounts tied to your corporate domain name through Cloud Identity

Organisational setup

https://cloud.google.com/docs/enterprise/best-practices-for-enterprise-organizations

Automate project creation

Automation allows you to support best practices such as consistent naming conventions and labeling of resources

Use Cloud Deployment Manager, Ansible, Terraform, or Puppet
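Whatever tool does the automation, encoding the naming convention in code keeps it consistent. A sketch with a hypothetical `<team>-<env>-<suffix>` convention; the checks reflect the documented constraints as I understand them (project IDs are 6 to 30 characters of lowercase letters, digits, and hyphens, start with a letter, and cannot end with a hyphen; label keys start with a lowercase letter, keys and values are at most 63 characters, and a resource has at most 64 labels). International characters in labels are not handled.

```python
import re

PROJECT_ID = re.compile(r"[a-z][a-z0-9-]{4,28}[a-z0-9]")  # 6-30 characters in total
LABEL_KEY = re.compile(r"[a-z][a-z0-9_-]{0,62}")          # 1-63 characters
LABEL_VALUE = re.compile(r"[a-z0-9_-]{0,63}")             # 0-63 characters


def make_project_id(team: str, env: str, suffix: str) -> str:
    """Build a project ID from a hypothetical <team>-<env>-<suffix> convention."""
    pid = f"{team}-{env}-{suffix}".lower()
    if not PROJECT_ID.fullmatch(pid):
        raise ValueError(f"invalid project ID: {pid}")
    return pid


def label_problems(labels: dict) -> list:
    """Return a list of label violations (empty if the labels look valid)."""
    problems = []
    if len(labels) > 64:
        problems.append("more than 64 labels")
    for key, value in labels.items():
        if not LABEL_KEY.fullmatch(key):
            problems.append(f"bad key: {key!r}")
        if not LABEL_VALUE.fullmatch(value):
            problems.append(f"bad value for {key!r}: {value!r}")
    return problems


print(make_project_id("Data", "prod", "01"))
print(label_problems({"env": "prod", "cost-center": "cc_1234", "Team": "x"}))
```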

Specify your project structure

Create an organisation node

Define your resource hierarchy

https://cloud.google.com/docs/images/best-practices-for-enterprise-organizations.png
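IAM policies are inherited down this hierarchy: the effective policy on a resource is the union of the policy set on it and the policies of its ancestors. A sketch with a hypothetical organization, folder, and project (the IDs are made up); billing accounts are not part of this chain.

```python
# Hypothetical organization -> folder -> project chain (IDs are made up).
PARENT = {
    "projects/web-prod": "folders/111111111111",
    "folders/111111111111": "organizations/123456789012",
    "organizations/123456789012": None,
}

# (role, member) pairs granted directly on each node.
POLICIES = {
    "organizations/123456789012": {("roles/viewer", "group:auditors@example.com")},
    "folders/111111111111": {("roles/compute.admin", "group:sre@example.com")},
    "projects/web-prod": {("roles/storage.objectAdmin", "user:alice@example.com")},
}


def effective_bindings(resource):
    """Union of the bindings on the resource and on every ancestor."""
    bindings = set()
    node = resource
    while node is not None:
        bindings |= POLICIES.get(node, set())
        node = PARENT.get(node)
    return bindings


for binding in sorted(effective_bindings("projects/web-prod")):
    print(binding)
```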