Sources

https://www.lexico.com/en

https://www.kdnuggets.com/gps.html

https://www.investopedia.com/terms/d/deep-learning.asp

https://skymind.ai/wiki/neural-network

http://www.b-eye-network.com/blogs/devlin/archives/2009/08/fathers_of_the_data_warehouse_3.php

https://www.investopedia.com/terms/d/data-warehousing.asp

https://www.investopedia.com/articles/basics/03/053003.asp

https://www.investopedia.com/terms/d/datamining.asp

https://hackerbits.com/data/history-of-data-mining/

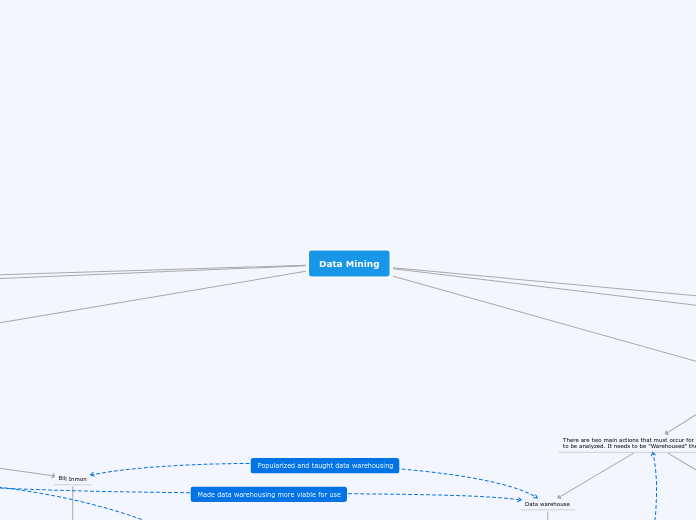

Data Mining

Importance

The uses listed in the Uses section are just a handful of the many uses of data mining, which follows from its nature. Data mining is the organization and use of data to resolve uncertainties and predict outcomes, so as a species that thirsts for knowledge we need something like it to give us the information we want. In the modern age, what matters is looking toward the future by examining our current data, and this is exactly what data mining is made to do. Data mining makes both data collection and organization far easier, which in turn makes the hunt for knowledge easier as well.

How it is done

Example

A restaurant has a large number of food orders. The computer takes these orders and organizes them into classes such as the dish, who made the dish, and the customer who ordered it. The restaurant can then collect each customer's opinion of the dish. From this organized information it can see patterns: who the good and bad cooks are, which customers are negative overall (and so which demographic of people enjoys the restaurant), and which dishes are the most enjoyed or best selling. This information

Two main steps must occur before raw data can be analyzed: it must first be "warehoused" and then "mined".

Mining

Mining is the process of reading through the data warehouse and looking for patterns from which the analyst then draws conclusions.
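As a rough illustration of looking for patterns in data that has already been organized, the sketch below averages customer ratings per cook and per dish for a restaurant. The records, the field names, and the helper `average_rating_by` are all made up for this example; real data mining software does far more than this.

```python
from collections import defaultdict

# Hypothetical, already-organized restaurant records (illustrative values only).
orders = [
    {"dish": "pasta", "cook": "Ana", "rating": 5},
    {"dish": "pasta", "cook": "Ben", "rating": 2},
    {"dish": "soup", "cook": "Ana", "rating": 4},
    {"dish": "soup", "cook": "Ben", "rating": 3},
    {"dish": "pasta", "cook": "Ana", "rating": 4},
]

def average_rating_by(records, key):
    """Return {group: mean rating} for the given field, e.g. 'cook' or 'dish'."""
    totals = defaultdict(lambda: [0, 0])  # group -> [sum of ratings, count]
    for record in records:
        totals[record[key]][0] += record["rating"]
        totals[record[key]][1] += 1
    return {group: total / count for group, (total, count) in totals.items()}

by_cook = average_rating_by(orders, "cook")
by_dish = average_rating_by(orders, "dish")

# The "pattern" here is simply which cook has the highest average rating.
best_cook = max(by_cook, key=by_cook.get)
print(by_cook, by_dish, best_cook)
```

With these made-up records the highest average belongs to the cook "Ana". An analyst would still have to decide whether five orders are enough to conclude anything.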

Data warehouse

A data warehouse is an electronic way of storing and organizing large amounts of data efficiently so that it can be mined easily. First the raw data is given to the system, and the software compiles it. Once the software has finished compiling, the data is "cleaned" (any errors within the data are corrected or excluded). The system then summarizes the data and organizes it into groups for easier use by the analysts.
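The clean-then-summarize steps can be sketched in a few lines. This is a toy under stated assumptions: the raw rows, the rule for what counts as an error (a missing dish name or a rating outside 1 to 5), and the function names `clean` and `summarize` are invented for illustration and do not describe any particular warehouse product.

```python
# Hypothetical raw rows as they might arrive: (dish, rating). Values are illustrative.
raw_rows = [
    ("pasta", 5),
    ("soup", 4),
    ("", 3),        # missing dish name -> treated as an error
    ("pasta", 11),  # rating outside the assumed 1-5 scale -> treated as an error
    ("soup", 2),
]

def clean(rows):
    """Exclude rows with a missing dish or an out-of-range rating."""
    return [(dish, rating) for dish, rating in rows if dish and 1 <= rating <= 5]

def summarize(rows):
    """Group cleaned rows by dish and record the order count and mean rating."""
    groups = {}
    for dish, rating in rows:
        groups.setdefault(dish, []).append(rating)
    return {
        dish: {"orders": len(ratings), "mean_rating": sum(ratings) / len(ratings)}
        for dish, ratings in groups.items()
    }

cleaned = clean(raw_rows)
summary = summarize(cleaned)
print(summary)
```

This sketch only excludes bad rows. Correcting them, the other option the text mentions, would need rules specific to the data.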

Uses

Science

Data can be used and organized far more efficiently than before, and the resulting information is more relevant. This allows for more accurate and more informative data analysis, leading to better experiments for researchers in all fields of science.

Businesses

Data mining is very important to the success of modern businesses because it helps a business anticipate its future and the actions it must take to succeed. Modern businesses need to take the least risky but most rewarding actions to grow and become more profitable, and data mining is one of the safest ways to find them. A computer can take all of the information at its disposal and use it to predict the company's future. Data analysts can then use the computer's output to make educated decisions for the company.

Investors

Investors often use data mining when deciding which businesses they should invest in. Current trends in a business reveal a pattern, usually the growth or decline of the company, and from this prediction investors can judge whether the investment would be a good or bad choice. The decision to invest is usually based on the yearly reports, as they provide the data needed to assess the company's future.

Definition

Deep learning is a subset of machine learning, but there is an important difference between it and traditional machine learning methods. A traditional method usually refines one model for the task it is assigned, often working from features that a person has chosen. Deep learning instead uses artificial neural networks with many layers, which learn their own representations of the data and can explore far more ways of reaching the objective. In this respect deep learning is loosely similar to a brain, since a human brain also tries many different methods to reach a goal. This is why deep learning suits tasks like data mining: it can pick up on many possible patterns in the data, where a simpler method might find only one and perfect it.

Non-Linear Classifier

A linear classifier draws a straight line to separate and organize information. A non-linear classifier is used when the data cannot be separated by a straight line: it instead uses a curved boundary, which allows for more accurate sorting and predictions.
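The difference can be seen on a small made-up data set in which one class sits near the origin and the other surrounds it. The sketch below compares one particular straight-line rule with a circular (curved) rule. The points, the rules, and the function names are assumptions chosen for illustration, not a real classifier that learns its boundary from data.

```python
# Toy 2-D points: label 1 if close to the origin, 0 if far away (illustrative data).
points = [
    ((0.0, 0.5), 1), ((0.5, 0.0), 1), ((-0.5, 0.0), 1), ((0.0, -0.5), 1),
    ((2.0, 0.0), 0), ((-2.0, 0.0), 0), ((0.0, 2.0), 0), ((0.0, -2.0), 0),
]

def linear_rule(point):
    """A straight-line boundary: everything left of the vertical line x = 1 is class 1."""
    x, _ = point
    return 1 if x < 1.0 else 0

def circular_rule(point, radius=1.0):
    """A curved boundary: everything inside a circle of the given radius is class 1."""
    x, y = point
    return 1 if x * x + y * y < radius * radius else 0

def accuracy(rule):
    """Fraction of the toy points that the rule labels correctly."""
    correct = sum(1 for point, label in points if rule(point) == label)
    return correct / len(points)

print(accuracy(linear_rule), accuracy(circular_rule))
```

On this data the circular rule labels every point correctly, while the straight line tried here mislabels three of the eight points. Because the outer class surrounds the inner one, no other straight line can separate them either.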

Artificial Neural Network

An artificial neural network is a set of algorithms modeled on the human brain that can receive inputs, process them, and generate outputs. Here, the purpose of an artificial neural network is to sense patterns within the input it receives. It does this by first working out which pieces of information are useful to it and which can be discarded. After cleaning the data in this way, it looks at the combinations within the data and the patterns they form. It can then use a pattern it has found to predict future outcomes.
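The receive, process, and output cycle can be shown with a single artificial neuron written as a simple threshold unit. This is only a sketch: the weights and thresholds below are chosen by hand, and the function names are made up for this example. Real networks combine many such units and learn their weights from data.

```python
def threshold_neuron(inputs, weights, threshold):
    """Output 1 if the weighted sum of the inputs reaches the threshold, else 0."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum >= threshold else 0

def logical_and(a, b):
    # Both inputs must be on for the sum (at most 2) to reach the threshold of 2.
    return threshold_neuron([a, b], weights=[1, 1], threshold=2)

def logical_or(a, b):
    # A single active input is enough to reach the threshold of 1.
    return threshold_neuron([a, b], weights=[1, 1], threshold=1)

# Print the truth table: a, b, AND, OR.
for a in (0, 1):
    for b in (0, 1):
        print(a, b, logical_and(a, b), logical_or(a, b))
```

The same unit produces different behaviour purely by changing the threshold, which hints at how adjusting weights lets a network fit different patterns.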

Independent and dependent variables

A dependent variable is a piece of data that changes depending on the independent variable, while the independent variable is set or observed on its own and does not change in response to the other variable.

Trend

The direction in which something is developing or changing.

Raw Data

Raw data is information in its purest form: it has not been modified for easy use. (Basically, it is data without the context behind it, which makes it hard to see what it means.)

Data mining is the analysis of large blocks of raw data to discover trends or patterns within the data. This is most often done with software that looks for patterns. These patterns allow vast amounts of raw data to become organized and easy to understand. They can also be used to predict future outcomes, as the computer can extend the trends forward.

Main Figures

Gregory Piatetsky-Shapiro

Gregory Piatetsky-Shapiro is one of the leading minds in data mining. He is a co-founder of two major institutions in data science and data mining: ACM SIGKDD (an association for data science and data mining) and KDD (conferences for showcasing and learning about the field). He is also the president of KDnuggets, a website specializing in data science and data mining news and discoveries. KDnuggets is linked below.

https://www.kdnuggets.com/

Bernhard E. Boser, Isabelle M. Guyon and Vladimir N. Vapnik

Bernhard E. Boser, Isabelle M. Guyon and Vladimir N. Vapnik updated the support vector machine so that it could use non-linear classifiers.

Warren McCulloch and Walter Pitts

Warren McCulloch was an American neurophysiologist who, together with Walter Pitts, who worked in computational neuroscience, wrote the article "A logical calculus of the ideas immanent in nervous activity", creating the first conceptual model of a neural network.

Alan Turing

Alan Turing came up with the idea behind the modern computer, which led to the advances in technology needed for the large-scale calculations and data storage used today.

Barry Devlin and Paul Murphy

Barry Devlin and Paul Murphy are regarded as the fathers of data warehousing, as they wrote the 1988 article "An architecture for a business and information system", the first article to discuss the idea of data warehousing in great detail. They wrote it while working at the American technology company IBM.

Bill Inmon

Bill Inmon is another person associated with founding data warehousing, though it is more accurate to say that he was the first to show off its potential and grow the idea. He did this through his book "Building the Data Warehouse", which taught people how to create and use data warehouses efficiently. Bill Inmon was also the first person to offer classes in data warehousing.

Thomas Bayes

Thomas Bayes was an English statistician who formulated Bayes' theorem.

Adrien-Marie Legendre and Carl Friedrich Gauss

Adrien-Marie Legendre was a French mathematician, and Carl Friedrich Gauss was a German physicist and mathematician. Working independently, they made groundbreaking discoveries in statistics, developing the method of least squares and, with it, regression analysis.

History

The roots of data mining lie deep in statistics and probability, so most of its history is the creation of theories that allow patterns within data to be spotted.

Present Day

Deep Learning

In recent years the biggest pursuit of the data mining community has been deep learning. Deep learning promises to uncover many more of the patterns hidden in data, and so to offer more possibilities and more accurate predictions.

1992

Non-Linear Classifiers

The support vector machine (a method that analyzes information and recognizes patterns) was updated so that it could use non-linear classifiers, allowing for better sorting and more accurate predictions.

1990s

The term "database mining" comes into use in the database community, and businesses and investors begin to use it to look at trends that will increase their customer base and to predict future customer demand and stock movements.

1943

Neural Network

The conceptual model of the artificial neural network was created, introducing the idea that a computer could recognize patterns in data. This was done in the article "A logical calculus of the ideas immanent in nervous activity", which is linked below.

https://link.springer.com/article/10.1007/BF02478259

1936

Universal Machine

Alan Turing wrote the paper "On Computable Numbers", which discussed the idea of a Universal Machine able to run advanced calculations and pioneered the idea of the modern computer. The paper is linked below.

https://londmathsoc.onlinelibrary.wiley.com/doi/abs/10.1112/plms/s2-42.1.230

1805

Regression Analysis

Regression analysis is the analysis of the relationship between dependent and independent variables. It uses the method of least squares, which places a line of best fit through the data. This line shows the overall trend between the independent and dependent variables and allows the user to predict future outcomes. In 1805 Adrien-Marie Legendre published the method of least squares, which he and Carl Friedrich Gauss each applied to determining the orbits of bodies around the Sun.
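A line of best fit can be computed directly from the standard least squares formulas for a single independent variable. The sketch below is a minimal version of that calculation. The data values and the name `least_squares_line` are made up for illustration, and the numbers were chosen to lie exactly on a line so the result is easy to check by hand.

```python
def least_squares_line(xs, ys):
    """Return (slope, intercept) of the line minimizing the sum of squared errors."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance-like and variance-like sums around the means.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Illustrative data: independent variable (year index) and dependent variable (sales).
years = [1, 2, 3, 4, 5]
sales = [12, 14, 16, 18, 20]  # made-up values that lie exactly on a line

slope, intercept = least_squares_line(years, sales)

# Extend the trend one step beyond the data to predict a future outcome.
predicted_year_6 = slope * 6 + intercept
print(slope, intercept, predicted_year_6)
```

Here the fitted line has slope 2 and intercept 10, so the prediction for year 6 is 22. With real, noisy data the line would not pass through every point, and predictions far beyond the observed range should be treated with caution.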

1763

Bayes' Theorem

Bayes' theorem uses what is known about the factors surrounding an event to give a more focused and informed probability for it. In simple terms, the more that is known about a cause and the factors that might change an effect, the better the probability can be estimated.
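One common form of the theorem gives the probability of a cause once an effect has been observed. The sketch below applies it to a made-up restaurant scenario. The function name `bayes_posterior`, its parameter names, and all of the numbers are assumptions for illustration only.

```python
def bayes_posterior(prior, likelihood, false_positive_rate):
    """P(cause | effect) from P(cause), P(effect | cause), and P(effect | not cause)."""
    # Total probability of seeing the effect, whether or not the cause is present.
    evidence = likelihood * prior + false_positive_rate * (1 - prior)
    return likelihood * prior / evidence

# Made-up numbers: 1% of customers are unhappy, 90% of unhappy customers leave a
# bad review, and 5% of happy customers also leave a bad review.
posterior = bayes_posterior(prior=0.01, likelihood=0.9, false_positive_rate=0.05)
print(round(posterior, 3))
```

With these assumed numbers, a bad review raises the probability that the customer was unhappy from 1% to roughly 15%. The extra information sharpens the estimate without making it certain.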